A research team from Adobe, Stanford, and Berkeley unveiled a new large-scale generative model that inserts people into photos in a realistic way. It takes an image of a person and integrates it into a marked area of a scene, while maintaining consistency and harmony in the overall composition.

The model, which is introduced in the paper “Putting People in Their Place: Affordance-Aware Human Insertion into Scenes”, has the ability to infer authentic postures based on the context of the scene, readjust the position of the person, and create a realistic and pleasing scene image.

It was trained in a self-supervised manner, on a large dataset of 2.4 million video clips containing people in different scenes, using images of 256×256 pixels resolution.

The model’s quantitative evaluation demonstrated its superior capability to generate a more realistic human appearance and more authentic human-scene interactions than prior methods.

At inference time, the model can be prompted to perform different tasks, including:

- Conditional generation using a reference person (the ability to generate realistic images of a person inserted into a scene, based on a reference image)

- Person hallucination (the ability to generate new images of people without a reference image of a person)

- Scene hallucination (the ability to generate new scenes given a reference image of a person)

- Partial body completion and cloth swapping (replacing the clothing worn by a person)

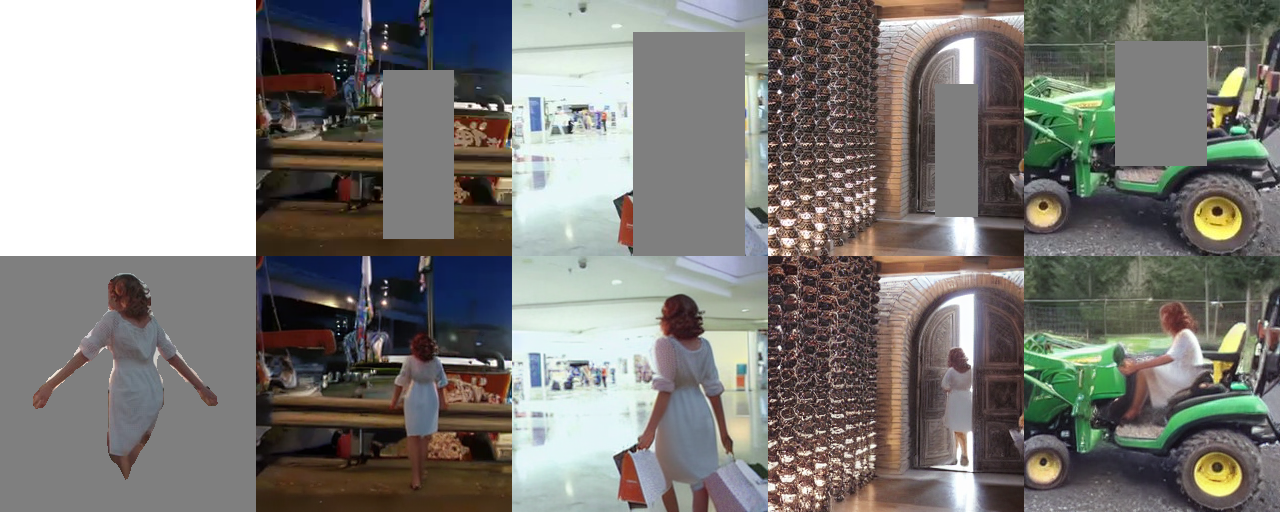

Here is a person realistically inserted in different scenes.

The method

Picture below shows an overview of the method.

There are 5 main components in the learning framework:

- The input that consists of two random frames from a video clip containing the same person: the masked frame and the reference frame. The first step is to mask out the person in the first frame. This creates a “hole” in the image that needs to be filled in. The second step is to crop and center the person in the reference frame.

- The masked image is converted by the autoencoder (VAE) into a smaller, latent space of 32x32x4 pixels resolution. This reduces the complexity of the input data and helps the model to learn more efficiently.

- The latent feature of the background image, a noisy image, and a rescaled mask are concatenated. The mask indicates which parts of the image are missing or corrupted.

- The encoder (CLIP) extracts the relevant features from the reference person, further used to condition the denoiser (UNet) via cross-attention.

- The denoiser algorithm selectively removes the unwanted noise and artifacts while preserving the important features of the scene and the inserted person, resulting in a more realistic and visually coherent composite image.

- The autoencoder (VAE) transforms the target image into a latent space, which is then compared to the predicted latent image.

Through this pipeline, the model generates a photo-realistic human image that closely resembles the reference person and repositions it to fit naturally within the input scene, filling in the previously masked region, or “hole.”

The training

The model was trained on a large dataset of video clips, with a resolution of 256×256 pixels, to perform tasks such as human insertion into scenes. During training, the model minimized the difference between the generated images and a separate set of ground truth images that were sourced from the same video frames.

It was also trained without the person-conditioning input, or without both the masked image and person-conditioning inputs, in order to learn a full unconditional distribution and support classifier-free guidance.

Evaluation tests & results

The model demonstrates the ability to produce realistic images of people and scenes when only one of these elements is present in the input.

It can also handle partial human completion tasks, such as changing poses or clothing pieces, and generate new images of people without any reference image.

Conclusion

This study presents a novel approach for inserting humans into scenes, which considers the surrounding environment.

The effectiveness of this method is demonstrated through qualitative results, suggesting potential applications in computer vision, graphics, and robotics.

Further research could evaluate the scalability of this approach by testing it on larger and more diverse datasets.

Learn more:

- Research paper: “Putting People in Their Place: Affordance-Aware Human Insertion into Scenes” (on arXiv)

- Project page (on GitHub)