Mistral AI has introduced Leanstral, a specialized AI coding agent designed to create and verify formal mathematical proofs.

Leanstral is the first open-source coding agent designed for Lean 4 that generates and refines proofs, helping ensure that implementations align with their intended requirements. Lean 4 is a proof assistant used by programmers to write and verify mathematical proofs and to formally demonstrate that programs meet their specifications.
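As a small illustration of what it means to show that a program meets its specification in Lean 4, the sketch below defines a toy function and proves one property about it. The names double and double_eq_two_mul are made up for this example, and it assumes a recent Lean 4 toolchain in which the omega tactic is built in.

```lean
-- A tiny "program": double a natural number.
def double (n : Nat) : Nat := n + n

-- Its "specification": doubling is the same as multiplying by two.
-- Lean accepts the file only if this proof checks.
theorem double_eq_two_mul (n : Nat) : double n = 2 * n := by
  unfold double   -- goal becomes: n + n = 2 * n
  omega           -- decision procedure for linear arithmetic
```

If the proof were wrong or incomplete, Lean would reject the file with an error, and that error is the kind of feedback a proof agent can act on.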

Such precision is highly useful in areas where accuracy is essential, such as secure cryptography, safety-critical software for aerospace and medicine, and advanced mathematical research.

Key features

- Built for proof engineering: It is specifically designed to master the syntax of Lean 4 and the strict logic required to verify that code is mathematically correct.

- Agent-based architecture: Unlike a standard chatbot, it operates in a loop (Search-Verify-Refine) to solve multi-step logical problems.

- 6B active parameters, small enough to run on standard hardware: Because only about 6B parameters are active for each token, it can perform the repetitive “trial and error” steps required for math proofs much faster than a massive, general-purpose model.

- Trained on realistic repositories: It was trained on actual formal repositories (like the Lean community’s massive mathematical library, Mathlib).

- 32k context window: The large context allows it to read long files of definitions and previous theorems to solve complex problems. In formal math, you often need to reference definitions from 500 lines earlier in a file. A small context window would forget the rules of the problem halfway through.
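The Search-Verify-Refine loop mentioned in the list above can be sketched in a few lines. This is a minimal illustration and not Leanstral's actual implementation: propose and check are hypothetical callables standing in for a model call and a Lean checker, and the toy versions exist only so the sketch runs without either.

```python
from typing import Callable, Optional


def refine_until_verified(
    propose: Callable[[str, Optional[str]], str],
    check: Callable[[str], Optional[str]],
    goal: str,
    max_rounds: int = 8,
) -> Optional[str]:
    """Return a candidate that passes `check`, or None if the budget runs out.

    propose(goal, feedback) -> candidate proof text (hypothetical model call)
    check(candidate)        -> None on success, else an error message
    """
    feedback: Optional[str] = None
    for _ in range(max_rounds):
        candidate = propose(goal, feedback)  # search: draft or revise a proof
        error = check(candidate)             # verify: ask the checker
        if error is None:
            return candidate
        feedback = error                     # refine: feed the error back in
    return None


# Toy stand-ins so the sketch runs without a model or a Lean toolchain.
def toy_propose(goal: str, feedback: Optional[str]) -> str:
    return "by simp" if feedback else "by rfl"


def toy_check(candidate: str) -> Optional[str]:
    return None if candidate == "by simp" else "error: rfl failed"


result = refine_until_verified(toy_propose, toy_check, "xs ++ [] = xs")
print(result)  # by simp
```

The point of the loop is that the checker's error message, and not a human, decides whether another attempt is needed, which is why fast, cheap attempts matter.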

Leanstral can work with different Model Context Protocol (MCP) servers through Mistral Vibe, which lets it connect to various external tools and data sources. It was also specifically trained to perform best with lean-lsp-mcp, a connection that lets Leanstral talk directly to the Lean 4 language tooling and so read code and error messages much more accurately. Leanstral is released under the Apache 2.0 license with fully open weights.

The model

Leanstral is a sparse mixture-of-experts (MoE) model with roughly 120B total parameters, of which about 6B are active during inference. It consists of a pool of 128 experts, of which only a small subset, typically 4, is selected for each token. A routing mechanism dynamically activates the most relevant experts, so the model keeps a very large overall capacity while computing with only a small portion of it at a time, which lowers inference cost relative to a dense model of the same total size.
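The routing step can be illustrated with a short sketch. It assumes a common top-k scheme in which the router scores every expert and a softmax over the selected scores gives the mixing weights; the source does not describe Leanstral's exact router, and the random scores here are stand-ins.

```python
import math
import random


def route_token(router_logits: list[float], top_k: int = 4) -> dict[int, float]:
    """Pick the top_k experts for one token and return {expert_index: gate_weight}."""
    top = sorted(
        range(len(router_logits)),
        key=lambda i: router_logits[i],
        reverse=True,
    )[:top_k]
    # Softmax over the selected logits only, so the gate weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


random.seed(0)
NUM_EXPERTS = 128  # expert count quoted in the text
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in router scores
gates = route_token(logits, top_k=4)

print(sorted(gates))           # indices of the experts active for this token
print(sum(gates.values()))     # gate weights sum to (approximately) 1
print(4 / NUM_EXPERTS)         # fraction of experts used per token
print(6 / 120)                 # active fraction of parameters, from ~6B of ~120B
```

Only the selected experts run for a given token, which is how a model with a large total parameter count can have the per-token compute of a much smaller one.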

Evaluation results

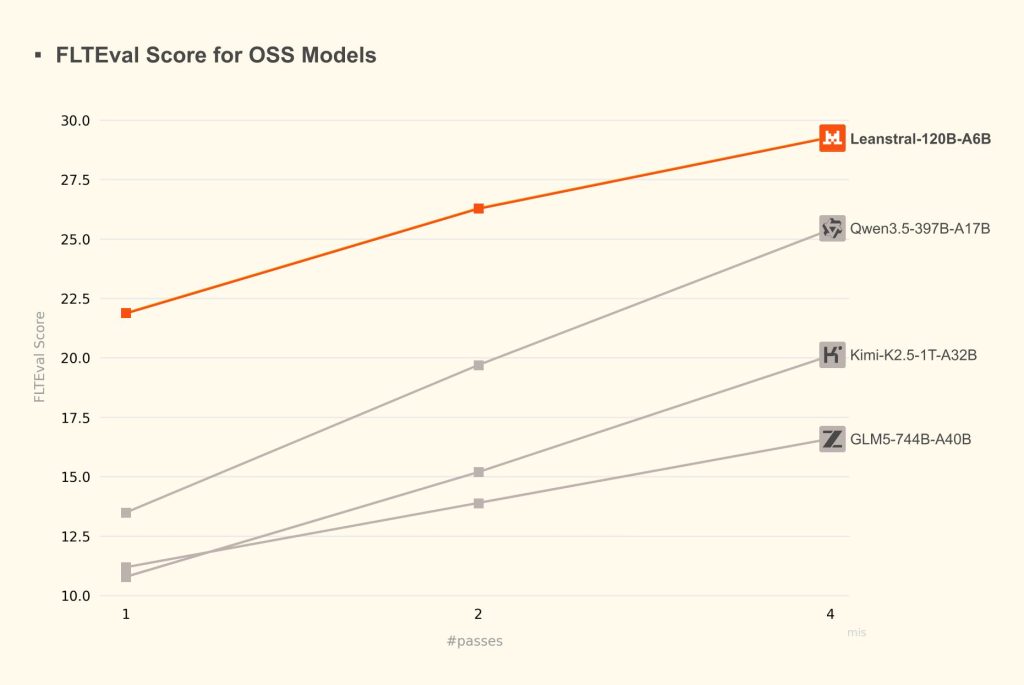

Mistral introduced a new benchmark suite called FLTEval, designed to measure performance on real-world proof engineering tasks rather than isolated problems. It is based on the Fermat’s Last Theorem (FLT) project at Imperial College London, a collaboration led by Professor Kevin Buzzard and funded by the EPSRC through 2029.

Leanstral was compared against leading coding agents (Claude Opus 4.6, Sonnet 4.6, Haiku 4.5) and open-source models (Qwen3.5 397B-A17B, Kimi-K2.5 1T-A32B, GLM5 744B-A40B). It outperforms larger models such as GLM5 and Kimi-K2.5, achieving higher scores with fewer computational passes (see the chart above).

Even Qwen3.5-397B-A17B, the strongest open-source competitor in the comparison, achieves a score of 25.4 after four attempts, whereas Leanstral reaches a higher score of 29.3 at the same cost level.

The table below compares the cost-efficiency of leading coding agents (Claude Opus 4.6, Sonnet 4.6, and Haiku 4.5) with that of Leanstral. A single Leanstral run scores slightly below Sonnet 4.6 and Haiku 4.5 at a fraction of the cost, and two passes already score higher than both: 26.3 for $36, against 23.7 for $549 with Sonnet. Claude Opus 4.6 attains the highest score, 39.6, but its execution cost is approximately 92 times that of a single Leanstral run, or about 46 times that of two runs.

| Model | Cost ($) | Score |
|---|---|---|
| Haiku | 184 | 23.0 |
| Sonnet | 549 | 23.7 |
| Opus | 1,650 | 39.6 |
| Leanstral | 18 | 21.9 |
| Leanstral pass@2 | 36 | 26.3 |
| Leanstral pass@4 | 72 | 29.3 |
| Leanstral pass@8 | 145 | 31.0 |
| Leanstral pass@16 | 290 | 31.9 |
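The cost comparisons can be reproduced directly from the table above. The sketch below uses only the table's figures; the helper names cost_ratio and points_per_dollar are introduced here for illustration.

```python
# (cost in USD, score) copied from the table above.
results = {
    "Haiku": (184, 23.0),
    "Sonnet": (549, 23.7),
    "Opus": (1650, 39.6),
    "Leanstral": (18, 21.9),
    "Leanstral pass@2": (36, 26.3),
    "Leanstral pass@4": (72, 29.3),
    "Leanstral pass@8": (145, 31.0),
    "Leanstral pass@16": (290, 31.9),
}


def cost_ratio(a: str, b: str) -> float:
    """How many times more expensive run `a` is than run `b`."""
    return results[a][0] / results[b][0]


def points_per_dollar(name: str) -> float:
    """Benchmark score obtained per dollar spent."""
    cost, score = results[name]
    return score / cost


for name in results:
    print(f"{name:<18} {points_per_dollar(name):.3f} points per dollar")

print(round(cost_ratio("Opus", "Leanstral")))         # 92
print(round(cost_ratio("Opus", "Leanstral pass@2")))  # 46
```

Note that Leanstral's cost grows roughly linearly with the number of passes while the score gains shrink, so each additional pass buys fewer points per dollar.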

Case studies

- Fixing breaking changes in Lean code: When a new Lean version breaks existing code, Leanstral can reconstruct the failing environment, diagnose the root cause, and suggest fixes. For example, it identified that using def blocked the rewrite tactic due to a mismatch in how a type alias was interpreted and recommended replacing it with abbrev, which resolved the issue.

- Translating between logic languages: In a second case study, Leanstral showed that it can translate complex logic across different proof ecosystems. Mistral's team gave the model definitions written in Rocq (formerly Coq), a widely used proof assistant, taken from a Princeton University computer science course. Leanstral successfully converted these definitions into Lean 4.

How to access Leanstral

- Mistral Vibe (Zero-Setup): For immediate use, you can activate the model within Mistral Vibe by typing /leanstall. You can then switch to it by pressing Shift+Tab until it appears, or simply run the command vibe --agent lean.

- Labs API: Developers can access the model via a specialized API endpoint (labs-leanstral-2603). This endpoint is currently free or low-cost to encourage community feedback and gather data for future verified code models.

- Open source: For those who prefer to run the model locally on their own hardware, the model weights are available for download on Hugging Face.
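For the Labs API route, a request might be assembled as sketched below. This assumes the model is served through Mistral's standard chat completions endpoint and that an API key is stored in a MISTRAL_API_KEY environment variable; only the model identifier comes from the text above, so check the official API documentation before relying on any of the rest.

```python
import json
import os
import urllib.request

API_URL = "https://api.mistral.ai/v1/chat/completions"  # assumed endpoint
MODEL = "labs-leanstral-2603"  # model identifier quoted in the text


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the Leanstral model."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_request(
    "Prove in Lean 4 that n + 0 = n for every natural number n.",
    os.environ.get("MISTRAL_API_KEY", "missing-key"),
)
print(request.full_url)

# Sending is left commented out so the sketch runs without network access or a key.
# The response shape below is an assumption based on the chat completions format.
# with urllib.request.urlopen(request) as response:
#     print(json.load(response)["choices"][0]["message"]["content"])
```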

Conclusion

Leanstral is an efficient open-source coding agent designed for Lean 4. It targets a major problem in high-level math: the human bottleneck. Checking complex proofs by hand takes a massive amount of time and effort. Leanstral speeds this up by drafting formal proofs, having Lean check them automatically, and refining them until the checker accepts them.

Because it focuses on machine-checked correctness, it is well suited to high-stakes fields such as cryptography, safety-critical software, and advanced mathematics.

Read more:

- Release announcement: “Leanstral: Open-Source foundation for trustworthy vibe-coding”

- Hugging Face