A research team from the University of Maryland, College Park proposed an AI model to reconstruct a 3D picture of what a person is looking at, even if the scene is not in front of the camera. This technique can tell us what people are seeing and where their attention is focused on. It can also be used for recovering 3D scenes that are hidden from the camera’s view.

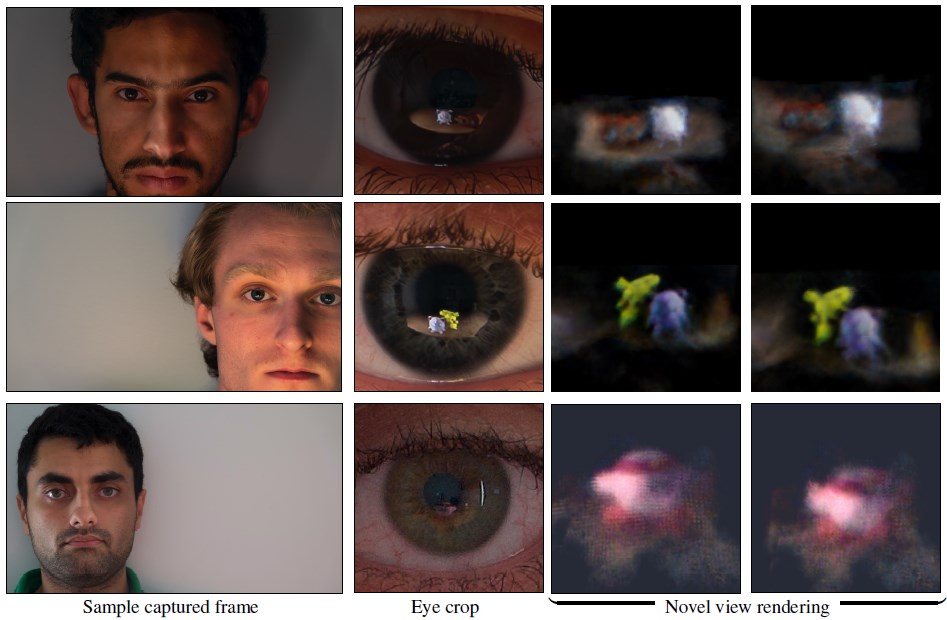

The researchers tested their method on a diverse set of eye images, encompassing both real and synthetic examples, featuring individuals with different eye colors. Through these experiments, they demonstrated that the method can reconstruct the 3D image of the scene from eye reflections.

The human eye model

The human eyes function as a pair of lenses, focusing light onto the light-sensitive cells located in the retina, which enable us to perceive visual images (see the picture below).

When we look at someone else’s eyes, we also see the light that bounces off their cornea. The shape of the eye modifies the reflected image that we perceive.

Similarly, when we take photos of someone’s eyes, the camera lens captures the light that bounces off their eyes. These photos preserve valuable information about the scene or object that the person was looking at.

Method

The team aimed to reconstruct the 3D scene observed by a person using monocular image sequences captured from a fixed camera position.

They identified some challenges including the inherent noise in cornea localization, the complexity of iris textures, and the low-resolution nature of the captured reflections in each image. To address these challenges, the researchers followed three main steps:

- Cornea pose estimation (it estimates the location and orientation of the cornea for each image)

- Radiance field reconstruction (it reconstructs the radiance field of the scene)

- Iris texture refinement (it refines the iris texture)

Step 2 (the radiance field reconstruction) is detailed in the picture below:

The light rays coming from the camera hit the cornea and bounce off the eye instead of going straight from the camera to the scene. This is because the eye acts like a mirror that reflects the scene.

The bounced light rays have a different origin and direction (eye) than the original ones (camera), so they have to be adjusted. At the same time, the iris texture can interfere with the reflected scene and has to be separated.

To solve this problem, the team trained two neural networks:the eye texture field (for the iris texture) and the radiance field (for the reflected scene).

The eye texture field is a neural network that learns the iris pattern from the eye image. It takes the projection of the bounced light ray origin on the eye coordinate system and outputs an estimated eye texture. The network is trained to reconstruct the iris part of the eye image while ensuring smoothness and consistency of the iris texture across various images.

The radiance field is a neural network that uses a ray tracing technique (how light rays bounce off the eye and enter the camera). It learns the color and density of each point in the scene based on the light rays that pass through it. The output (a vector with color and density) helps to reconstruct the image through volumetric rendering.

There are two loss functions used to train the eye texture field network:

- Lrecon: This loss function compares the reconstructed eye image with the original image. It measures the pixel-wise difference between the two images and penalizes any errors or inconsistencies. The lower the value of Lrecon, the better the quality of the reconstructed eye image.

- Lradial: This is a way of making sure that the eye texture (the iris pattern) looks smooth and consistent across different images. Lradial helps to avoid mixing up the eye texture and the reflected scene.

The outputs of the two neural networks are blended to reconstruct the cornea image.

Training

The training data in this research consists of both synthetic data and real-world data.

The authors train two networks in their method: a cornea pose estimation network and a radiance field reconstruction network.

- The cornea pose estimation network is trained on synthetic data generated by rendering realistic eye images with different eye colors, head poses, and scene reflections.

- The radiance field reconstruction network is trained on real-world data collected by capturing portrait images of 10 subjects with different eye colors and skin tones in indoor and outdoor environments.

Evaluation

The authors tested their method on synthetic and real-world eye images that contain scene reflections. They used a synthetic dataset of an indoor scene with a realistic eye model, a real-world setup with multiple objects, and a DSLR camera.

They showed that it has the ability to manage different eye colors and lighting conditions, and can withstand certain imperfections in the cornea and camera positions without a substantial decrease in the quality of reconstruction.

Conclusion

This research shows us that the human eye is a source of information about the world that we usually ignore or miss, but it can reveal more about the 3D scenes beyond the camera’s line of sight.

Learn more:

- Research paper: “Seeing the World through Your Eyes” (on arXiv)

- Project page